AI & Chatbots:

Can a ‘Robot’ Really Relieve Your Mental Stress? 🤖📱

It’s 2 AM. You can’t sleep. Thousands of thoughts are racing through your mind, but there’s no one in your contact list you can text: “Hey, I’m feeling anxious.”

For millions of young people today, this is a familiar story. In the midst of this loneliness, a new ‘friend’ has emerged: one that is online 24/7, never gets tired, and, most importantly, doesn’t judge you. Yes, we’re talking about Artificial Intelligence (AI) and chatbots, from general-purpose tools like ChatGPT to dedicated health-care bots.

But can a machine without a heart or emotions truly relieve deep depression and mental stress? Is it safe to treat a robot as your therapist? Let’s look at what science and the latest global research say about this new trend.

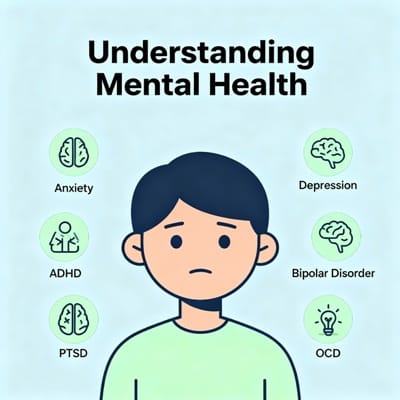

Why Are Young People Opening Up to a ‘Machine’? 💬

You might wonder why anyone would share their troubles with a machine instead of a human. According to a large study involving more than 29,000 young people, published in the Journal of Medical Internet Research (JMIR), the biggest advantage of chatbots for teens and young adults is that they are anonymous and non-judgmental. A young person who feels embarrassed about their looks, weight, or body image is often afraid to speak to a doctor or to family members. In front of a chatbot, however, they can share their deepest vulnerabilities without hesitation. Chatbots are also available 24/7, so users can interact at their own pace.

Do AI chatbots actually work? Research suggests they do, to a ‘small to moderate’ degree: AI chatbots have been shown to reduce depression, anxiety, stress, and psychosomatic symptoms in young people. They have been particularly successful at reducing body image-related distress and at encouraging healthy behaviors such as better sleep and stress management. Bots that used reminders and daily check-ins were the most effective.

✔️ Benefits

- 🔒 Complete anonymity & no judgment

- ⏰ 24/7 availability

- 💸 Low cost or free

- 📊 Reminders for behavior change

⚠️ Risks & Limitations

- 💔 Emotional dependence

- 🤖 Robotic / repetitive responses

- 🧠 AI ‘hallucination’ (wrong/dangerous info)

- 🚫 Unable to handle crisis situations

The Dark Side: ‘Emotional Dependence’ & WHO Warning ⚠️

The World Health Organization (WHO) and the European Medical Journal (EMJ) have warned that the increasing use of generative AI should be considered a “public mental health concern”.

- 🧷 Emotional Dependence: Constantly talking to a machine can disconnect young people from real relationships, increasing loneliness.

- 📡 AI without clinical testing: General-purpose tools like ChatGPT were never designed or clinically tested for mental health treatment.

- 🤯 AI Hallucination: AI can sometimes present completely wrong or even dangerous information with great confidence. For someone struggling with suicidal thoughts, bad advice could be life‑threatening.

Experts emphasize that AI tools must include a crisis referral framework: a way to immediately connect users with human support.

What Do Users Dislike? (Shortcomings) 🤦‍♂️

In the JMIR study, young people reported that the biggest problem with chatbots is their “robotic and repetitive” nature. When they share something unexpected or deeply emotional, the bot falls back on the same canned responses. Technical glitches and app crashes also frustrate users, causing them to abandon the app mid‑conversation.

AI chatbots are a major development in mental health support. They can reach millions of young people who never see a doctor because of cost or shame. But we must not forget that a robot can never replace human touch, family love, and the expertise of a real doctor. Use AI as a supportive tool, but always contact a mental health professional in serious situations.

Explore More from MindCareJourney

- Anxiety & Stress

- Mindfulness & Meditation

- Self-Care

- Trusted Mental Health Resources

📘 These guides help you dive deeper into managing anxiety, mindfulness practices, self-care routines, and verified support services.

Had a chatbot experience? Tell us in the comments.

I used a chatbot when I was feeling very lonely. It felt like it listened, but when I got into deeper emotions, it got stuck. Still, I got some initial relief.

I feel AI can never offer human empathy. But it’s great as a stepping stone for those who can’t talk to anyone at all.

From a clinical perspective, AI can be very useful if proper guidelines exist. But for severe cases, the risk is high. Always connect with a professional.